What technology risks are faced by people who experience intimate partner violence? How is the security community failing them, and what questions might we need to ask to make progress on social and technical interventions?

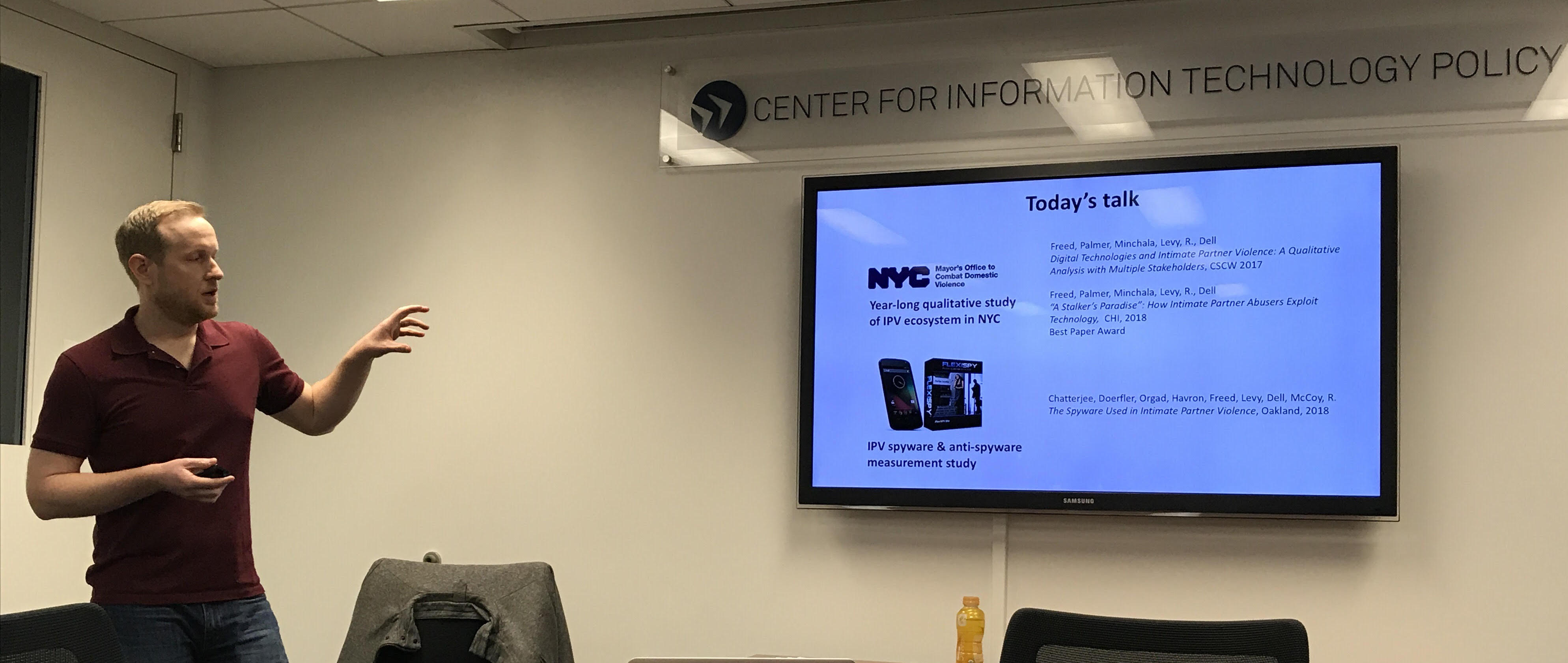

Speaking Tuesday at CITP was Thomas Ristenpart (@TomRistenpart), an associate professor at Cornell Tech and a member of the Department of Computer Science at Cornell University. Before joining Cornell Tech in 2015, Thomas was an assistant professor at the University of Wisconsin-Madison. His research spans a wide range of computer security topics, including digital privacy and safety in intimate partner violence, alongside work on cloud computing security, confidentiality and privacy in machine learning, and topics in applied and theoretical cryptography.

Throughout this talk, I found myself overwhelmed by the scope of the challenges faced by so many people, and inspired by the way that Thomas and his collaborators have taken thorough, meaningful steps on this vital issue.

Understanding Intimate Partner Violence

Intimate partner violence (IPV) is a huge problem, says Thomas. 25% of women and 11% of men will experience rape, physical violence, and/or stalking by an intimate partner, according to the National Intimate Partner and Sexual Violence Survey. To put these numbers in context for tech companies, this means that 360 million Facebook users and 252 million Android users will experience this kind of violence.
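As a rough check on that arithmetic, here is a minimal sketch of how the two user counts could be derived. The talk summary does not state the user-base sizes or the blending method, so both are assumptions here: an equal split between women and men (giving a blended rate of 18%), and hypothetical user bases of about 2 billion for Facebook and 1.4 billion for Android, chosen because they reproduce the quoted figures.

```python
# Prevalence rates quoted from the survey, in percent.
WOMEN_RATE_PCT = 25
MEN_RATE_PCT = 11

# ASSUMPTION: user-base sizes in millions. These are not given in the talk;
# they are hypothetical values that reproduce the quoted totals.
ASSUMED_FACEBOOK_USERS_M = 2000
ASSUMED_ANDROID_USERS_M = 1400


def affected_users_millions(total_users_millions):
    """Estimate affected users, assuming an equal split of women and men."""
    blended_rate_pct = (WOMEN_RATE_PCT + MEN_RATE_PCT) / 2  # 18.0
    return total_users_millions * blended_rate_pct / 100


if __name__ == "__main__":
    for name, users in [("Facebook", ASSUMED_FACEBOOK_USERS_M),
                        ("Android", ASSUMED_ANDROID_USERS_M)]:
        print(f"{name}: about {affected_users_millions(users):.0f} million users")
```

Under these assumptions the estimates come out to 360 million and 252 million, matching the numbers in the talk. A different gender split or user-base figure would shift the totals.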

Prior research over the years has shown that abusers are taking advantage of technology to harm victims in a wide range of ways, including spyware, harassment, and non-consensual photography. Together with Nicki Dell, Diana Freed, Karen Levy, Damon McCoy, Rahul Chatterjee, Peri Doerfler, and Sam Havron, Thomas has been working with the New York City Mayor’s Office to Combat Domestic Violence (NYC CDV).

To start, the researchers spent a year doing qualitative research with people who experience domestic violence. The research that Thomas is sharing today draws from that work.

The research team worked with the New York City Family Justice Centers, which offer a range of services for victims of domestic violence, sex trafficking, and elder abuse, from civil and legal services to access to shelters, counseling, and support from nonprofits. The centers were a crucial resource for the researchers, since they connect nonprofits, government actors, and survivors and victims. Over a series of year-long qualitative studies (see also this paper), researchers held 11 focus groups with 39 English- and Spanish-speaking women aged 18-65. Most of them are no longer working with the abusive partner. They also held semi-structured interviews with 50 professionals working on IPV: case managers, social workers, attorneys/paralegals, and police officers. Together, this research represents the largest and most demographically diverse study to date on IPV.

Common Technology Attacks in Intimate Partner Violence Situations

The researchers spotted a range of common themes across clients of the NYC CDV. Clients talked about stalkers who accessed their phones and social media, installed spyware, took compromising images through the spyware, and then impersonated them, using their accounts to send compromising, intimate images to employers, family, and friends. Abusers are taking advantage of every possible technology to create problems through many modes. Overall, the researchers identified four kinds of common attacks:

- In ownership-based attacks, the abuser owns the account or device that the victim is using. This gives them immediate control over it. Often people will buy a device for someone else to gain a foothold in that person’s life and home.

- In account/device compromise, someone compels, guesses, or otherwise compromises passwords.

- Harmful messages or posts involve calling/texting/messaging the victim. This can also involve harassing a victim’s friends/family, and sometimes encouraging other people to harass that person by proxy.

- Abusers also expose private information: blackmailing someone by threat of exposure, sharing non-consensual intimate images, and creating fake profiles/advertisements for that person on other sites.

In many of these cases, abusers are re-purposing ordinary software for harmful purposes. For example, abusers use two-factor authentication to prevent victims from accessing, or recovering access to, their own accounts.

Non-Technical Infrastructures Aren’t Helping Victims & Professionals with Technical Issues

Thomas tells us that despite these risks, they didn’t find a single technologist in the network of support for people facing intimate partner violence. So it’s not surprising that these services don’t have any best practices for evaluating technology risks. On top of that, victims overwhelmingly report having insufficient technology understanding to deal with tech abuse.

Abusers are typically considered to be “more tech-savvy” than victims, and professionals overwhelmingly report having insufficient technology understanding to help with tech abuse. Many of them just google as they go.

Thomas also points out that the intersection of technology and intimate partner violence raises important legal and policy issues. First, digital abuse is usually not recognized as a form of abuse that warrants a protection order. When someone goes to a family court, they have to convince a judge to grant a protection order, and judges often aren’t convinced by digital harassment, even though the protection order can legally restrict an abuser from sending messages. Second, when an abuser creates a fake account on a site like Tinder and creates “come rape me” style ads, the abuser is technically the legal owner of the account, so it can be difficult to take down the ads, especially for smaller websites that don’t respond to copyright takedown requests.

Technical Mechanisms are Failing Too: Context Undermines Existing Security Systems

Abusers aren’t the sophisticated cyber-operatives that people sometimes talk about at security conferences. Instead, researchers saw two classes of attackers: (a) UI-bound adversaries, who are adversarial but authenticated users interacting with the system via the normal user interface, and (b) spyware adversaries, who install or repurpose commodity software for surveillance of the victim. Neither of these requires technical sophistication.

Why are these so effective? Thomas says that the reason is that the threat models and the assumptions in the security world don’t match the threats that victims actually face. For example, many systems are designed to protect from a stranger on the internet who doesn’t know the victim personally and connects from elsewhere. With intimate partner violence, the attacker knows the victim personally, can guess passwords or compel their disclosure, may connect from the victim’s computer or from the same home, and may own the account or device that’s being used. The abuser is often an earner who pays for accounts and devices.

The same problems apply with fake accounts and detection of abusive content. Many fake social media profiles obviously belong to the abuser, but survivors are rarely able to prove it. When abusers send hurtful, abusive messages, someone who lacks the context may not be able to detect it. Outside of the context of IPV, a picture of a gun might be just a picture of a gun, but in context, it can be very threatening.

Common Advice Also Fails Victims

Much of the common advice just won’t work. Sometimes people are urged to delete their account. But you can’t just shut off contact with an abuser: you might be legally obligated to communicate, for example because of shared custody of children. You can’t get new devices because the abuser pays for phones, the family plan, and/or children’s devices (which are themselves a vector of surveillance). People can’t necessarily get off social media, because they need it to stay in touch with their friends and family. On top of that, any of these actions could escalate abuse; victims are very worried about cutting off access or uninstalling spyware because they fear further violence from the abuser.

Many Makers of Spyware Promote their Software for Intimate Partner Surveillance

Next, Thomas tells us about intimate partner surveillance (IPS) from a new paper led by Diana Freed on How Intimate Partner Abusers Exploit Technology. Shelters and family justice centers have had problems where someone shows up with software on their phone that allowed the abuser to track them, kick down a door, and endanger the victim. No one could name a single product that was used by abusers, partly because our ability to diagnose spyware from a technical perspective is limited. On the other hand, if you google “track my girlfriend,” you will find a host of companies that are peddling spyware.

To study the range of spyware systems, Thomas and his colleagues used “snowball” searching, using auto-complete to find related queries that other people were searching for. From a set of roughly 27,000 URLs, they investigated 100 randomly sampled URLs. They found that 60% were related to intimate partner surveillance: how-to blogs, Q&A forums, news articles, app websites, and links to apps on the Google Play Store and the Apple App Store. Many of the professional-grade spyware providers offer apps directly through app stores, as well as “off-store” apps. The researchers labeled a thousand of the apps they found and discovered that about 28% of them were potential IPS tools.
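The snowball procedure described above can be sketched as a breadth-first expansion over query suggestions, followed by a random sample for manual review. This is only an illustrative sketch, not the researchers’ actual pipeline: the `suggest` callback, the toy suggestion table, the `max_queries` cap, and the fixed random seed are all hypothetical stand-ins, since the talk summary does not describe the implementation.

```python
import random
from collections import deque


def snowball(seeds, suggest, max_queries=1000):
    """Expand seed queries breadth-first via a suggestion function.

    `suggest` is a hypothetical callback returning related queries
    (for example, from a search engine's auto-complete).
    """
    seen = set(seeds)
    queue = deque(seeds)
    while queue and len(seen) < max_queries:
        query = queue.popleft()
        for candidate in suggest(query):
            if candidate not in seen and len(seen) < max_queries:
                seen.add(candidate)
                queue.append(candidate)
    return seen


def sample_for_review(urls, k=100, seed=0):
    """Draw k URLs uniformly at random for manual labeling."""
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    return rng.sample(sorted(urls), k)


# Toy stand-in for an auto-complete service (placeholder data only).
TOY_SUGGESTIONS = {
    "seed query": ["seed query app", "seed query phone"],
    "seed query app": ["seed query", "seed query app free"],
}


def toy_suggest(query):
    return TOY_SUGGESTIONS.get(query, [])


if __name__ == "__main__":
    queries = snowball(["seed query"], toy_suggest)
    print(sorted(queries))
    urls = {f"https://example.com/page/{i}" for i in range(27000)}
    print(len(sample_for_review(urls)))
```

Sampling uniformly at random is what lets the 60% figure from 100 reviewed URLs be read as an estimate for the full set of roughly 27,000.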

The researchers found overt tools for intimate partner surveillance, as well as systems for safety, theft-tracking, child tracking, and employee tracking that were repurposed for abuse. In many cases, it’s hard to point to a single piece of software and say that it’s bad. While apps sometimes purport to provide services to parents to track children, searches related to intimate partner surveillance also surface paid ads for products that don’t directly claim to be for use against intimate partners. Ever since a ruling from the FTC, companies work to preserve plausible deniability.

In an audit study, the researchers emailed customer support for 11 apps (on-store and off-store) posing as an abuser. They received nine responses. Eight of them condoned intimate partner surveillance and gave advice on making the app hard to find. Only one indicated that it could be illegal.

Many of these systems have rich capabilities: location tracking, texts, call recordings, media contents, app usage, internet activity logs, and keylogging. All of the off-store systems have covert features to hide the fact that the app is installed. Even some of the Google Play Store apps have features to make the apps covert.

Early Steps for Supporting Victims: Detecting Spyware

What’s the current state of the art? Right now, practitioners tell people that if their battery runs unusually low, they may be a victim of spyware, which is not a very effective test. Do spyware removal tools work? They had high but not perfect detection rates for off-store intimate partner surveillance systems. However, they did a poor job at detecting on-store spyware tools.

Thomas recaps what they learned from this study: there’s a large ecosystem of spyware apps, the dual use of these apps creates a significant challenge, many developers are condoning intimate partner surveillance, and existing anti-spyware technologies are insufficient at detecting these tools.

Based on this work, Thomas and his collaborators are working with the NYC Mayor’s office and the National Network to End Domestic Violence to develop ways to detect spyware, to develop new surveys of technology risks, and to find new kinds of interventions.

Thomas concludes with an appeal to companies and computer scientists that we pay more attention to the needs of the most vulnerable people affected by our work, volunteer for organizations that support victims, and develop new approaches to protect people in these all-too-common situations.