A Belgian university recently banned all watches from exams due to the possibility of smartwatches being used to cheat. Similarly, some standardized tests in the U.S. like the GRE have banned all digital watches. These policies seem prudent, since today’s smartwatches could be used to smuggle in notes or even access websites during the test. However, their potential use for cheating goes much further than that.

As part of my undergrad research at the University of Michigan, I’ve recently been focusing on the security and privacy implications of wearable devices, including how smartwatches might be used for cheating in an exam. Surprisingly, while there’s been interest in the security implications of wearable devices, the focus within the research community has been on how these devices might be attacked rather than on how these devices challenge existing social assumptions.

ConTest: A Smartwatch App for Collaborative Cheating

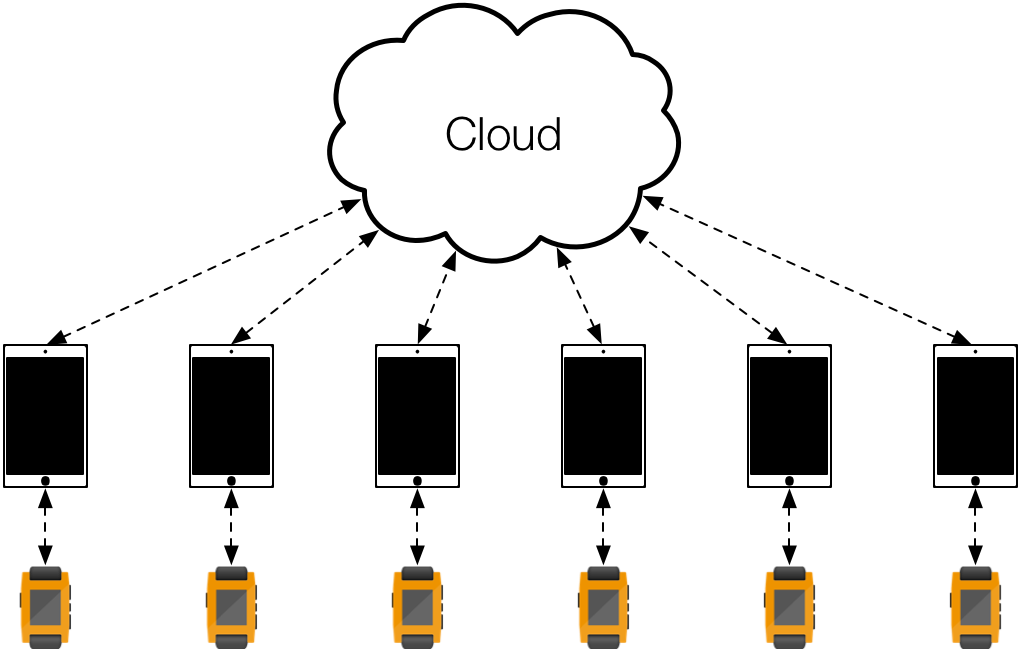

As a proof of concept, I developed ConTest, an application for the Pebble smartwatch that shows how students could inconspicuously collaborate on multiple-choice exams in real time. ConTest allows students to select a question, vote on answers, and view the most popular solution based on all of the responses from other students taking the exam. Prior to an exam, students pair their watches with their smartphones and choose the exam that they are taking. During the exam, the smartphone—hidden in the student’s pocket or backpack—facilitates communication between the smartwatch and a cloud-based aggregation service. All user interaction during the exam takes place on the smartwatch itself with simple, inconspicuous button presses.
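To make the aggregation step concrete, here is a minimal sketch of how a service might tally votes and report the most popular answer for a question. It is an illustration only, not ConTest's actual code: the `VoteAggregator` class, its method names, the five answer choices, and the alphabetical tie-break are all assumptions made for this example.

```python
from collections import Counter, defaultdict

# Assumed set of multiple-choice options; the real exam format may differ.
VALID_ANSWERS = ("A", "B", "C", "D", "E")


class VoteAggregator:
    """Hypothetical in-memory tally of one vote per user per question."""

    def __init__(self):
        # Maps question number -> {user_id: answer}.
        self._votes = defaultdict(dict)

    def vote(self, user_id, question, answer):
        """Record a vote; voting again on a question replaces the old vote."""
        if answer not in VALID_ANSWERS:
            raise ValueError(f"unknown answer: {answer!r}")
        self._votes[question][user_id] = answer

    def most_popular(self, question, exclude_user=None):
        """Return the most common answer, optionally ignoring one user.

        Returns None when no votes are available. Ties are broken
        alphabetically, which is an arbitrary choice for this sketch.
        """
        ballots = self._votes.get(question, {})
        counts = Counter(a for u, a in ballots.items() if u != exclude_user)
        if not counts:
            return None
        top = max(counts.values())
        return min(a for a, c in counts.items() if c == top)
```

Passing `exclude_user` lets a client ask for the consensus among the other students only, which matches the description above. Storing one answer per user per question means a changed vote overwrites the earlier one rather than counting twice.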

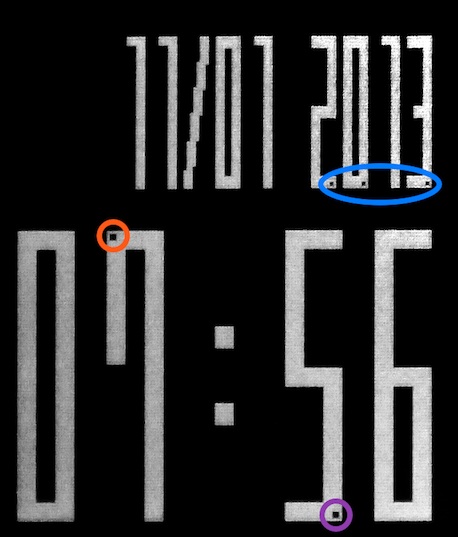

ConTest demonstrates how hard such an application can be to detect. It displays the question number and answer by inverting a small number of pixels in digits of the time and date. For example, in the figure below, the red-circled block of missing pixels in the seven indicates that the user has voted for answer B. The purple-circled block of pixels in the five indicates that the most popular answer selected by other users is D. Similarly, the question number is encoded with missing pixels in the top date digits using a binary encoding.

Although users can see this interface at close range, it’s practically invisible from more than a couple of feet away, and the cheating application looks just like a regular watch face.
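The binary encoding of the question number can be sketched as follows. This is a hedged illustration rather than the app's real layout: the function names, the default of six pixel blocks, and the most-significant-bit-first ordering are assumptions. Each `True` entry stands for a block of pixels that is inverted on the watch face.

```python
def encode_question(number, bits=6):
    """Return one boolean per pixel block, most significant bit first.

    True means the block is inverted (a 1 bit); False means it is left
    untouched (a 0 bit). The block count is an assumed parameter.
    """
    if not 0 <= number < 2 ** bits:
        raise ValueError(f"{number} does not fit in {bits} bits")
    return [bool((number >> i) & 1) for i in reversed(range(bits))]


def decode_question(blocks):
    """Recover the question number from a list of block states."""
    value = 0
    for inverted in blocks:
        value = (value << 1) | int(inverted)
    return value
```

With six blocks this scheme distinguishes 64 question numbers, and each additional block doubles the range while changing only a few more pixels.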

Disrupting Security Assumptions

The obvious solution for preventing students from cheating using smartwatches is to ban watches from exams, just as Artevelde College in Belgium recently did. But the devices will continue to evolve, both decreasing in size and detectability, and increasing in capability and ubiquity. Future form factors are likely to be even less conspicuous and to enable novel attacks (think smart contact lenses or implantable smartphones). Outright bans may not be desirable or even feasible. In the long run, we will need to adapt in more drastic ways, perhaps by abandoning traditional exams as a form of student assessment.

While wearable devices offer an exciting platform for new types of applications, they also upend implicit security assumptions that are built into many everyday social contexts. Testing centers assume that watches don’t talk to the Internet; casinos assume that eyeglasses aren’t heads-up displays. ConTest demonstrates that even today’s technology challenges existing threat models. The time has come for the research community to start considering the attack vectors introduced by this new class of technology, and for all of us to start adapting our assumptions and threat models based on an awareness of such devices.

If you’re interested in a more detailed discussion about ConTest and the security implications of smartwatches, see this technical paper I coauthored with Zakir Durumeric, Jeff Ringenberg, and J. Alex Halderman.

Maybe it’s time to rethink exams. Learning is not just memorizing facts; it’s not only accommodation or assimilation, and it’s not only cognition. Maybe exams could allow new technology to be used, and maybe even books. Maybe you could form teams.

Maybe the test itself could be part of the learning process. And not the end of it, like it usually is.

Fascinating, and troubling in its implications, Alex!