[Editor’s note: The New York Times weighed in with “When the Web’s Chaos Takes an Ugly Turn”, which includes several quotes from Tufekci.]

Reddit may be the most important Internet forum that you have never heard of. It has more than a billion page-views a month, originates many Internet memes, brilliantly exposes hoaxes, hosts commentary on everything ranging from the trivial to the most serious–and it is the forum that President Barack Obama chose for his “ask me anything” session. Part of Reddit’s success has been due to its “live and let live” ethos in sub-forums, called “subreddits.” These sub-forums are created and moderated by volunteers with little or no interference from Reddit, whose parent company is the publishing conglomerate Condé Nast. This delegation approach facilitates Reddit’s business model, allowing it to operate with a comparatively small paid staff. However, the sub-forums that have flourished under this model are at times predatory and disturbing. For instance, “jailbait” was dedicated to sexually suggestive pictures of minors, and “creepshots” specialized in nonconsensual revealing photos of women in public places–including infamous “upskirt” photos.

The brewing controversy came to a turning point last week after the infamous moderator of sub-forums “jailbait”, “creepshots”, “rape”, “incest”, and “PicsOfDeadKids” was outed by Gawker. The moderator, “Violentacrez”, was revealed to be 49-year-old computer programmer Michael Brutsch. Outing a person’s name, or “doxxing”, is one of the few things that Reddit bans outright. Thus, Reddit chose to ban all links to Gawker from the site, but later rescinded the decision. The issue has been taken up in high-profile Reddit forums like “politics” and “TIL” (“Today I Learned”). Michael Brutsch, meanwhile, lost his job.

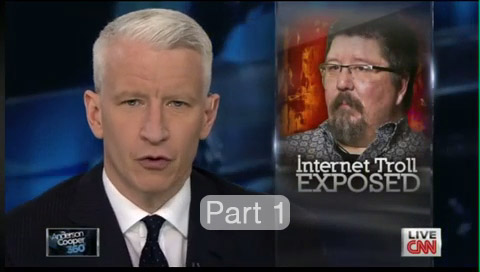

Tonight, CNN hosted an interview with Brutsch, in which he talks about how he may be sorry (although it really sounds more like he is sorry he was caught) but also claims to be a fall guy. In the interview, he proudly displays the trophy given to him by Reddit. After all, “jailbait” was once voted the top subreddit, and “jailbait” was among the highest search terms associated with Reddit on Google. By many accounts, it provided a great deal of traffic to the site. Brutsch himself was at one point moderating more than 600 subreddits, including many popular and mainstream ones like “WTF” and “funny”.

In the interview, Brutsch says that Reddit encouraged and enabled this behavior and that he shouldn’t have been a part of it. He seems to have a point. According to CNN, Reddit’s response was that Reddit now “regrets having sent the trophy.” Reddit also said it banned Brutsch’s “Violentacrez” account several times since last year, adding that Reddit “regret(s) not taking stronger action sooner.” (Michael Brutsch immediately went online on Reddit under his real name and denied parts of Reddit’s assertions, such as that he had been banned).

The real question is this: “What should Internet platforms do when confronted with speech that crosses over from being merely offensive to being predatory?” If Reddit is indeed regretting “not taking stronger action sooner,” its leadership must choose a decisively different path in the future–Michael Brutsch is not the only person in the world with an interest in spreading such content. Will Reddit continue to host forums dedicated to material that features gross invasions of the privacy of women going about their business in public? Will Reddit tolerate sexualizing of minors–even though its rules purport to ban it? It is clear that mere “bans” have been ineffective because Reddit relies on volunteer moderators whose interests may diverge from the intent of the ban. As Whitney Phillips observes:

“By examining not just what [Violentacrez] did, but what others allowed and in many cases encouraged him to do, it is therefore possible to see into the heart of Reddit’s administrative workings, whatever objections to the contrary its co-founders might present.”

During the “jailbait” scandal that preceded this latest wave, Reddit administrators shut the forum down. However, similar content popped up elsewhere on Reddit, and the ban degenerated into a “don’t ask, don’t tell” policy for moderators who were interested more in continuing their predatory behavior than in protecting children. Other Reddit contributors exposed that photos of obvious minors (young girls wearing “class of 2016” high school jackets) were being posted with misleading titles designed to get around the ban (e.g., “ah, look, 18+ hottie”).

We have ample examples of deeply problematic practices when Reddit takes a stance in which it essentially says, “we will let volunteers moderate their forums and not do anything unless the police knock on the door.” And as I’ve written elsewhere, starting and ending one’s analysis with “it’s just free speech,” and not analyzing harms, is an abdication of responsibility. Indeed, Reddit is not an absolute “free speech” environment, as noted in this insightful post by Chris Conley of the Northern California ACLU. Reddit has strong rules against outing people (and, nominally, against sexualizing minors).

What will Reddit do in practice to enforce existing rules about sexualizing of minors? How will it behave towards other predatory behavior, like that in the sub-forum “creepshots”? Reddit is not the only place on the Internet where one can find such forums, but Reddit should not allow and encourage such forums. Such a stance still allows a very, very broad interpretation of free speech.

What? Privileged geeks acting in ways that would shame even a stereotypical frat house? Say it ain’t so. The misogyny (and racism, and various other bigotries) of geek culture has been around for decades (geeks are surrounded by the rest of the world, after all), and high-sounding appeals to principle that just happen to enable further nastiness are mostly so much twaddle.

As long as Reddit’s users and moderators keep thinking violent misogyny is OK, it will be there, and that will probably be until Condé Nast takes some kind of serious hit to earnings as a result.

What you missed in the above is mention of the balance between free speech and anonymity.

Free speech tends to be more civil when the identity of the speaker is known. If you expect to be judged by what you say, then you are more considerate of the judgement of others. We care about our reputation.

Anonymous speech has some value when the speaker has something worthwhile to say but expects possible harm from speaking. But there need to be limits. Experience shows that unlimited anonymous speech tends toward massive abuse.

How to get the best, while avoiding the worst?

Relying on some sort of central authority, or the judgement of an amoral business, seems chancy.

One approach, in the case of Reddit – allow posters to be anonymous, but require moderators to be public. This protects those who need protection, but filters through a moderator more inclined to civil behavior.

That first instance of “Violentacres” should be “Violentacrez”

Fixed, thanks.

Reddit is a privately owned company, therefore the right to free speech ends as soon as you log in or post anything. They have every right to restrict your right to free speech by telling you what you can and cannot post. That they did not exercise this right demonstrates that they were either not aware of it, or simply didn’t care (I lean towards the latter, profit motive being the driving force behind any commercial entity).

If you want to post nasty, horrible things on the internet, host your own site and post it there, there is nothing stopping you and you retain your freedom of speech. Of course you probably wouldn’t get the wide readership that sites like Reddit provide, but the majority of people don’t really want to know about your odd little proclivities anyway.

There is then the bigger question of whether the culture of misogyny, misanthropy and downright malignancy that exists on the internet is there simply because sites like Reddit don’t object to people behaving in such a way on their property. Would we have such a poisonous subculture on the web if big sites like Reddit actually exercised their right to exert social pressure on their users by saying “Hey, not cool bro, gonna ban you for a bit so you can think about what you’re saying/posting. Come back when you’ve grown up”? Attitudes and culture can only be changed by social pressure, not by legislation, as those whose attitudes you would change will ultimately resent such legislation (and in the US such legislation would be impossible to implement anyway, due to the 1st amendment) and deliberately make their views even more extreme (even if they were not sincerely held) simply out of pique or spite.

It’s a tricky situation, which can only be resolved by the silent majority standing up and saying “We will not let this stand.”

Posting elsewhere on the Internet doesn’t change the issue. You just replace “Reddit” with the ISP allowing access to the independent site. There’s going to be some private-entity 3rd party involved somewhere.

Also, remember that in this conversation, there are mixed meanings of “Reddit”. There’s “Reddit” the company, and “Reddit” the community. The company does not (cannot) monitor all traffic/conversations on such a massive site. Therefore, they are unable to ban accounts / reply with “not cool bro”, based on the content of one post. The community is perfectly capable of the “not cool, bro” response, and does respond in that manner when appropriate. The answer to your question “Would we have such a poisonous subculture…?” is an obvious “Yes!”. The community is made up of millions of people, and some of them are assholes. Just as in real life, the assholes tend to congregate together into smaller and smaller subreddits/subcultures as the majority of the community persists with the “not cool, bro”s, but they don’t just disappear because you don’t agree with them.

The Internet (or Reddit) doesn’t make assholes or misogynistic/misanthropic subcultures. They exist IRL, and express themselves on the Internet, just like everyone else.